Adobe unveils a new AI tool that can detect photoshopped faces

Adobe, in collaboration with researchers from the University of California, Berkeley, has developed an artificial intelligence (AI) tool that can detect whether images of faces have been manipulated using Photoshop. In other words, the AI tool will help identify faked face images.

“While we are proud of the impact that Photoshop and Adobe’s other creative tools have made on the world, we also recognize the ethical implications of our technology,” said the company in a blog post. “Fake content is a serious and increasingly pressing issue.”

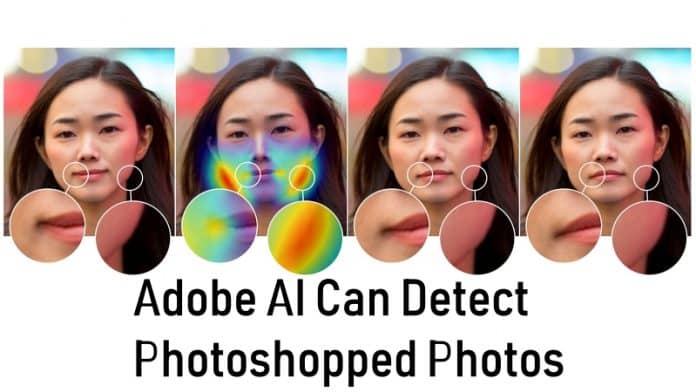

The researchers have published their work in a new paper titled “Detecting Photoshopped Faces by Scripting Photoshop,” which explains how the AI detects the use of a Photoshop tool called ‘Face Aware Liquify’. The tool can alter not only facial features but also facial expressions.

“The feature’s effects can be delicate which made it an intriguing test case for detecting both drastic and subtle alterations to faces,” said Adobe.

To detect manipulated faces, Adobe trained a Convolutional Neural Network (CNN), a form of deep learning, on a database of paired faces that were modified using Photoshop's Face Aware Liquify feature. A randomly chosen subset of those photos was used for training. In addition, an artist was hired to alter images that were mixed into the data set.
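The data preparation described above can be sketched roughly as follows. This is only an illustration, not the researchers' code: `warp_face` is a hypothetical stand-in for a scripted Face Aware Liquify edit, images are represented as plain lists of pixel values, and the 80% training fraction is an assumed figure that does not come from Adobe.

```python
import random

def warp_face(image, rng):
    """Hypothetical stand-in for a scripted Face Aware Liquify edit.

    A real pipeline would warp facial geometry; here each pixel value
    is simply nudged by a small random amount and clamped to 0..255.
    """
    return [min(255, max(0, p + rng.randint(-10, 10))) for p in image]

def build_paired_dataset(originals, train_fraction=0.8, seed=0):
    """Pair each original with an altered copy, then split the pairs at random.

    Returns (train, held_out), each a list of (image, label) tuples,
    where label 0 means original and label 1 means altered.
    """
    rng = random.Random(seed)
    pairs = [(img, warp_face(img, rng)) for img in originals]
    rng.shuffle(pairs)  # the training subset is chosen at random
    split = int(len(pairs) * train_fraction)

    def label(subset):
        examples = []
        for original, altered in subset:
            examples.append((original, 0))
            examples.append((altered, 1))
        return examples

    return label(pairs[:split]), label(pairs[split:])

# Ten toy "images" of four pixels each.
originals = [[i * 20] * 4 for i in range(10)]
train, held_out = build_paired_dataset(originals)
```

Splitting by pair rather than by individual image keeps an original and its altered copy on the same side of the split, so the held-out set contains only faces the classifier has never seen in either form.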

“We started by showing image pairs (an original and an alteration) to people who knew that one of the faces was altered,” says Adobe researcher Oliver Wang. “For this approach to be useful, it should be able to perform significantly better than the human eye at identifying edited faces.”

The researchers showed the altered and unaltered images to human volunteers to see how well they could identify which photo was fake. While humans were able to detect the fake faces only 53% of the time, the AI trained on manipulated photos correctly identified 99% of them. The tool can even revert altered images to their original, unedited appearance, with results that impressed even the researchers.

“It might sound impossible because there are so many variations of facial geometry possible. But, in this case, because deep learning can look at a combination of low-level image data, such as warping artifacts, as well as higher level cues such as layout, it seems to work,” says Professor Alexei A. Efros, UC Berkeley.

“The idea of a magic universal ‘undo’ button to revert image edits is still far from reality,” Adobe researcher Richard Zhang, who helped conduct the work, added. “But we live in a world where it’s becoming harder to trust the digital information we consume, and I look forward to further exploring this area of research.”

According to Adobe, the AI is a major step towards verifying the validity of digital media created with Adobe tools, and towards identifying and discouraging the misuse of images to spread fake news.

“This is an important step in being able to detect certain types of image editing, and the undo capability works surprisingly well,” says the head of Adobe Research, Gavin Miller. “Beyond technologies like this, the best defense will be a sophisticated public who know that content can be manipulated — often to delight them, but sometimes to mislead them.”
