Skynet Redux: This robot hurts people on purpose and makes them bleed

Many AI and robotics apologists base their support for AI on Asimov’s First Law of Robotics.

Asimov’s First Law of Robotics is very clear: Robots may not harm people.

Doomsday predictors, including Elon Musk of Tesla, have long forecast a Terminator-style Skynet domination of the world by AI. Apologists and robotics supporters, however, have always relied on Asimov’s First Law of Robotics to defend innovations in AI and robotics.

Even though there are quite a number of large robots, often used in manufacturing, that one would have to consider dangerous, roboticists have largely adhered to that rule.

The “law,” which science-fiction giant Isaac Asimov penned in his 1942 short story Runaround, is one of three rules, the second of which reads, “A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.”

Certainly, accidents involving robots do occur, for instance, when someone gets too close to an industrial robot.

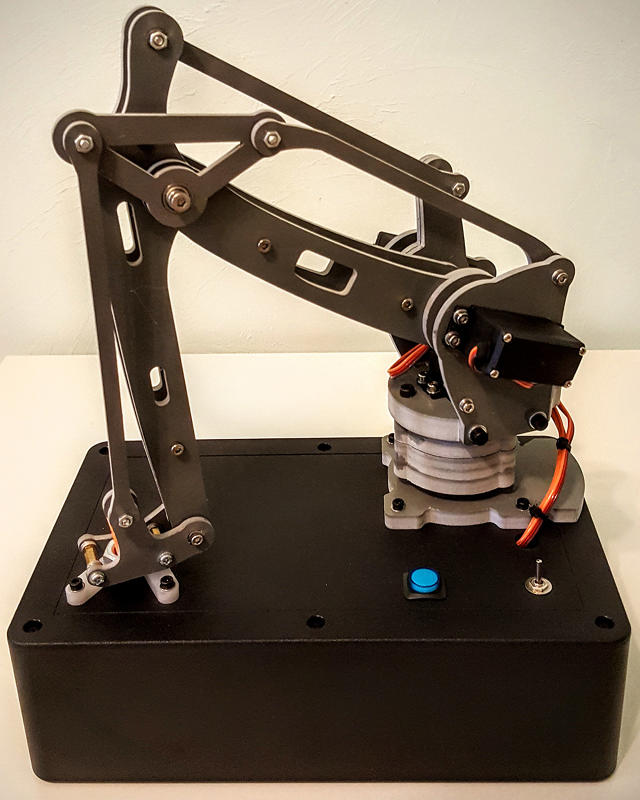

Now, however, a Berkeley, California man wants to start a healthy conversation among ethicists, philosophers, lawyers, and others about where technology is going and what hazards robots will pose to civilization in the future. Alexander Reben, a roboticist and artist, has developed a tabletop robot whose only mechanical purpose is to hurt people. Reben hopes his Frankenstein makes people talk.

Before you block your doors and windows, let’s define the terms: The harm caused by Reben’s robot is nothing more than a pinprick, although one delivered at high speed, causing the maximum amount of pain a small needle can cause on a fingertip.

Amusingly, he designed the machine so that it causes injury unpredictably. Sometimes the robot strikes; sometimes it doesn’t. Even Reben, when he exposes his fingertip to danger, has no idea whether he will end up shedding blood.

Speaking in a huge room on the top floor of the beautiful Victorian mansion where he lives and works as a member of Stochastic Labs, a Berkeley arts, technology, and science events incubator, Reben says, “No one’s actually made a robot that was built to intentionally hurt and injure someone. I wanted to make a robot that does this that actually exists…That was important to, to take it out of the thought experiment realm into reality, because once something exists in the world, you have to confront it. It becomes more urgent. You can’t just pontificate about it.”

Kate Darling, a researcher at the MIT Media Lab who studies the “near-term societal impact of robotic technology,” said when asked for her opinion about the experiment that she liked it, primarily because it involves robots. “I don’t want to put my hand in it, though,” she adds.

Reben is possibly best known as the creator of the BlabDroid, a small, harmless-looking robot that somehow motivates the people it encounters to tell it stories about their lives. Over the years, his work has focused on the relationships people have with technology and how that technology can help us understand our humanity.

He is highly aware that people are increasingly afraid of robots, either because robots pose some kind of theoretical physical danger to us or because many see them as marching toward replacing us. The common refrains these days are “Robots are going to take over,” or “Robots are going to take our jobs.”

Reben wants to compel people to confront the issue of how to deal with dangers from robots well before they actually occur. Usually, such a job might fall to academics, but Reben believes no research institution could get away with developing a robot that really hurts people. Likewise, no company is going to make such a robot because, he believes, “you don’t want to be known as the first company that made a robot to intentionally cause pain.”

He says it is better to leave such things to the art world, where “people have open minds.”

It is possible that there won’t be much outrage, at least not the type that would result if his machine were causing severe harm, given that his robot isn’t tearing people’s arms off or smashing anyone to little bits.

Reben hopes people from fields as disparate as law, philosophy, engineering, and ethics will take notice of what he has built. “These cross-disciplinary people need to come together,” Reben says, “to solve some of these problems that no one of them can wrap their heads around or solve completely.”

He envisions lawyers debating the liability problems surrounding a robot that can harm people, while ethicists consider whether it’s even okay to contemplate such an experiment. Philosophers will ponder why such a robot exists.

However, there is reason to believe that Asimov’s laws would never have protected us anyway.

In 2014, Ben Goertzel, AI theorist and chief scientist at financial prediction firm Aidyia Holdings, told io9 that “The point of the Three Laws was to fail in interesting ways; that’s what made most of the stories involving them interesting. So the Three Laws were instructive in terms of teaching us how any attempt to legislate ethics in terms of specific rules is bound to fall apart and have various loopholes.”

Experiment or not, Darling contends that Reben bears the ethical responsibility for any harm caused by his robot, since he is the one who designed it.

“We may gradually distance ourselves from ethical responsibility for harm when dealing with autonomous robots,” Darling says. “Of course, the legal system still assigns responsibility…but the further we get from being able to anticipate the behavior of a robot, the less ‘intentional’ the harm.”

As technology improves, we may have to reconsider the way we look at machines, she believes.

“From a responsibility standpoint,” Darling says, “robots will be more than just tools that we wield as an extension of ourselves. With increasingly autonomous technology, it might make more sense to view robots as analogous to animals, whose behavior we also can’t always anticipate.”

Reben, for his part, just hopes that as autonomous technology advances, people will stop sticking their heads in the sand.

“I want people to start confronting the physicality of it,” Reben says. “It will raise a bit more awareness outside the philosophical realm.”

“There’s always going to be situations where the unforeseen is going to happen, and how to deal with that is going to be an important thing to think about.”