Researchers at Google Brain on Thursday announced their response to Meta’s Make-A-Video by introducing their own AI-powered video creation model. Called “Imagen Video”, the AI (artificial intelligence) tool can generate videos from input text prompts.

For the uninitiated, Meta released its own AI video generator, “Make-A-Video”, last week; it also produces videos from text.

“Our work aims to generate videos from the text. We introduce Imagen Video, a text-to-video generation system based on video diffusion models that is capable of generating high definition videos with high frame fidelity, strong temporal consistency, and deep language understanding,” Google states in its research paper.

Google’s Imagen Video AI, which is still in the development phase, was trained on an “internal dataset” of 60 million image-text pairs and 14 million video-text pairs, as well as the LAION-400M image-text dataset.

How Does Google’s Imagen Video AI Work?

Google says that Imagen Video is a cascade of video diffusion models consisting of 7 sub-models, which between them perform text-conditional video generation, spatial super-resolution, and temporal super-resolution.

The process starts by taking an input text prompt and encoding it into textual embeddings with a T5 text encoder. A base Video Diffusion Model then generates a 16-frame video at 24×48 pixel resolution and 3 frames per second (fps).

A series of Temporal Super-Resolution (TSR) and Spatial Super-Resolution (SSR) models then upsamples this output into a final high-definition 1280×768 (width × height) video at 24 frames per second. The result runs for 128 frames (about 5.3 seconds), which amounts to approximately 126 million pixels.
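The figures quoted above can be checked with some quick arithmetic. The snippet below is only an illustrative sketch: the helper names `total_pixels` and `duration_seconds` are made up for this example and are not part of any Google code. It uses only the numbers reported in the article.

```python
def total_pixels(width: int, height: int, frames: int) -> int:
    """Total number of pixels across every frame of a clip."""
    return width * height * frames


def duration_seconds(frames: int, fps: float) -> float:
    """Playback length of a clip in seconds."""
    return frames / fps


# Final output reported for Imagen Video: 1280x768, 128 frames, 24 fps.
final_pixels = total_pixels(1280, 768, 128)
final_duration = duration_seconds(128, 24)

# Base model output reported in the article: 16 frames at 3 fps.
base_duration = duration_seconds(16, 3)

print(f"Final video: {final_pixels:,} pixels")       # roughly 126 million
print(f"Final duration: {final_duration:.2f} s")     # roughly 5.3 seconds
print(f"Base duration:  {base_duration:.2f} s")
```

Note that the base clip and the final clip come out to the same duration: going from 16 frames at 3 fps to 128 frames at 24 fps multiplies both the frame count and the frame rate by eight, so temporal super-resolution makes the motion smoother rather than making the video longer.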

Google claims that Imagen Video is not only capable of generating high-fidelity videos, but also has a high degree of controllability and world knowledge, which enables it to produce diverse videos and text animations in various artistic styles.

Further, the AI video tool can apply what it has learned from images, for example by creating videos in the style of a Van Gogh painting. It can even understand 3D structures and create videos of objects rotating while preserving their structure.

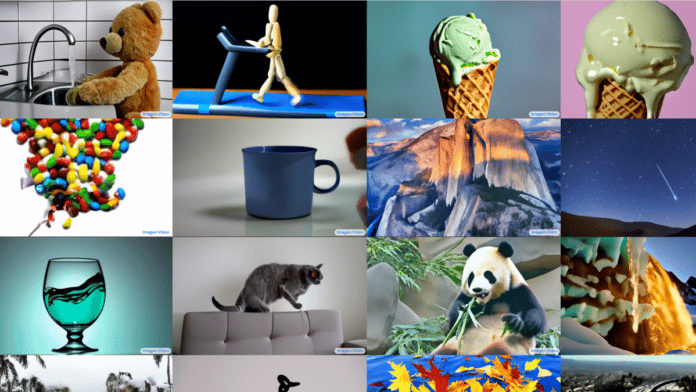

Google shared some demo videos made with Imagen Video, including a bunch of autumn leaves falling on a calm lake to form the text ‘Imagen Video’, a panda bear riding a car, a balloon full of water exploding in extreme slow motion, an astronaut riding a horse, and more.

Limitations

According to Google, while video generative models can have a positive impact on society, they may also be misused, for example, to generate fake, hateful, explicit, or harmful content.

Although the company found in internal trials that input text prompt filtering and output video content filtering can catch most explicit and violent content, there are still social stereotypes and cultural biases that are challenging to detect and filter.

Hence, Google has decided not to release the Imagen Video model or its source code until these concerns are addressed.